Meta Accelerates Its Chips to Renegotiate Power in AI

On March 11, 2026, Meta announced a roadmap for its proprietary AI chips, the Meta Training and Inference Accelerator (MTIA) family: MTIA 300, MTIA 400, MTIA 450, and MTIA 500. The critical operational detail is not just the names but the pace: approximately one new chip every six months, with full deployment expected by the end of 2027. The MTIA 300 is already in production in data centers, training ranking and recommendation models for feeds; the MTIA 400 is nearing deployment and extends to more AI workloads, including generative AI inference; the MTIA 450 and 500 are intended to scale that generative inference through 2027. The roadmap comes alongside a multi-year agreement, signed on February 18, 2026, to purchase millions of chips from Nvidia, including current and future GPUs and CPUs.

Superficially, this seems contradictory: buying massively from Nvidia while announcing independence. As an industrial strategy, however, it reveals how Meta intends to structure its bargaining power. Meta aims to turn one of its largest sources of spending and risk, AI computing capacity, into a negotiable asset: in-house capacity for specific workloads, and external purchases for elasticity and coverage.

As a strategist, I interpret this as a move to redistribute value within the supply chain: who captures the margin, who bears the supply risk, who pays for price volatility, and who retains optionality when models change.

A Proprietary Chip Does Not Compete with Nvidia, It Competes with Your Bill

The usual narrative of "technological sovereignty" often obscures the essentials: a proprietary chip is not justified by pride; it is justified by unit economics. The announcement gives concrete clues. Meta asserts that its MTIA chips achieve greater efficiency than commercial GPUs for its ranking and recommendation models by balancing computing power, memory bandwidth, and memory capacity according to internal needs. Translated into financial terms, the goal is not to win a public benchmark but to reduce the cost per training run and per inference for the models that consume the most compute hours.

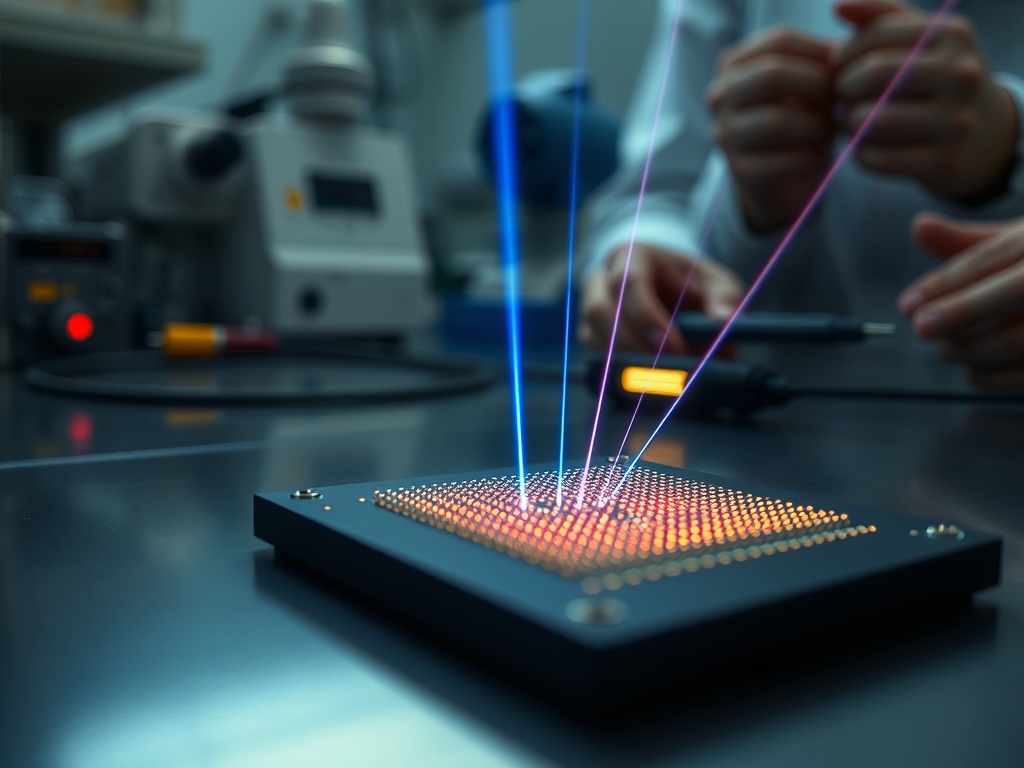

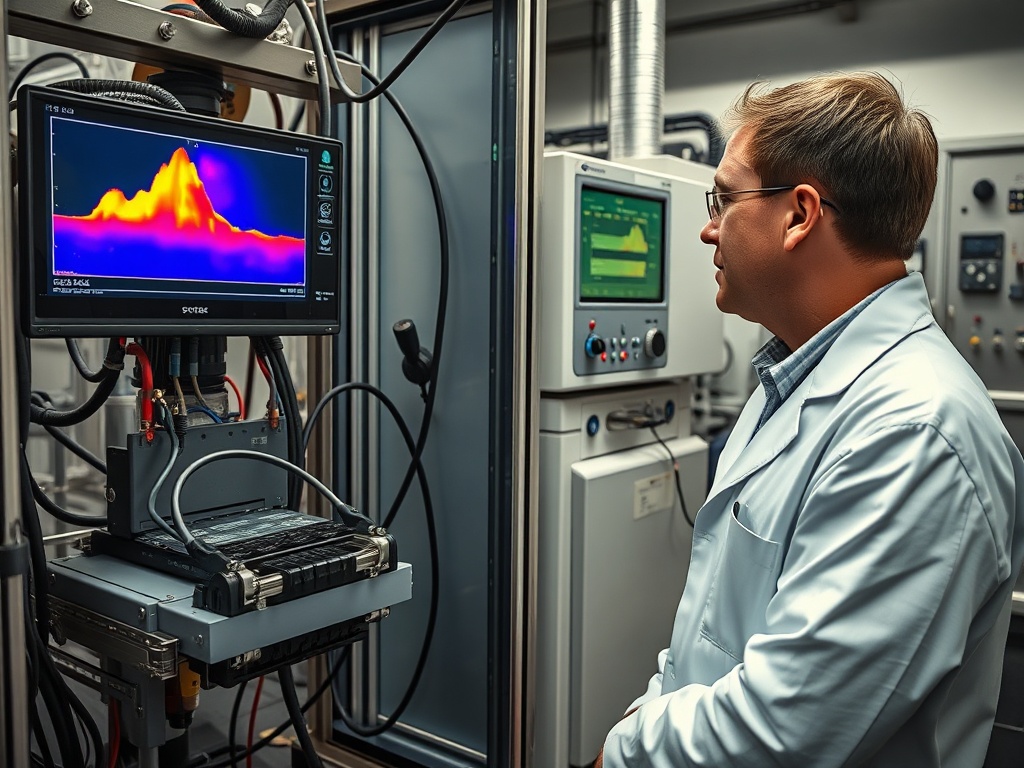

In a company whose business relies on recommendations, each point of efficiency has a multiplicative effect. If an in-house accelerator achieves the same performance with less energy, fewer machines, fewer racks, or less time, the savings are not linear: they also relieve pressure on electrical infrastructure, cooling, and data center expansion. The briefing mentions validation in a chip lab, where chips are tested at the chip, rack, and workload level before deployment on liquid-cooled servers. This investment in validation is costly, but it buys one thing: predictability. Predictability is what allows a company to plan capacity, negotiate supply contracts, and avoid precautionary over-purchases that tie up capital.
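One way to see why the savings stack up is a toy fleet-cost model. This is only a sketch: the function name `annual_fleet_cost` and every number in it (chip price, power draw, electricity price, the PUE-style `facility_overhead` multiplier, the amortization period) are hypothetical placeholders, not figures from Meta's announcement.

```python
# Toy model: all figures below are hypothetical placeholders, not Meta data.

HOURS_PER_YEAR = 24 * 365


def annual_fleet_cost(num_chips, chip_price_usd, watts_per_chip,
                      facility_overhead=1.4, usd_per_kwh=0.08,
                      amortization_years=4):
    """Return the yearly cost in USD of running an accelerator fleet.

    facility_overhead is a PUE-style multiplier: every watt drawn by a chip
    costs additional watts in cooling and power delivery.
    """
    # Hardware spend spread evenly over the amortization period.
    hardware = num_chips * chip_price_usd / amortization_years
    # Energy drawn by the chips themselves over one year, in kWh.
    chip_kwh = num_chips * watts_per_chip / 1000 * HOURS_PER_YEAR
    # Facility-level energy bill, including cooling and delivery overhead.
    energy = chip_kwh * facility_overhead * usd_per_kwh
    return hardware + energy


# Hypothetical comparison: the same throughput from fewer, lower-power chips.
baseline = annual_fleet_cost(num_chips=1000, chip_price_usd=30_000,
                             watts_per_chip=700)
efficient = annual_fleet_cost(num_chips=800, chip_price_usd=30_000,
                              watts_per_chip=500)

print(f"baseline:  ${baseline:,.0f}")
print(f"efficient: ${efficient:,.0f}")
print(f"saving:    {1 - efficient / baseline:.1%}")
```

In this sketch the chip count reduces both the hardware line and the energy line, and the facility overhead scales whatever energy remains, which is the sense in which an efficiency gain reaches beyond the chip itself.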

At the same time, Meta is not saying "goodbye, Nvidia." It is securing supply with a multi-year agreement for millions of chips. This duality is rational: proprietary hardware is efficient when the software and workload are stable and repeatable; GPUs remain the safety net for spikes, architectural changes, and generalized workloads. The desired outcome is a bill less exposed to market prices and scarcity.

Independence here is not binary. It is a curve. Each generation of MTIA that enters production shifts a portion of the expenditure from a price-powerful supplier to an internal platform where Meta controls design and timing.
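The curve can be sketched as a simple weighted average. The function name `blended_cost_per_hour` and the hourly costs used below are illustrative assumptions; the source gives no actual cost figures for either MTIA or Nvidia hardware.

```python
def blended_cost_per_hour(in_house_share, in_house_cost, external_cost):
    """Average cost per compute hour for a mixed fleet.

    in_house_share is the fraction of compute hours (0.0 to 1.0) served by
    proprietary chips; the remainder is served by purchased GPUs.
    """
    if not 0.0 <= in_house_share <= 1.0:
        raise ValueError("in_house_share must be between 0 and 1")
    return (in_house_share * in_house_cost
            + (1.0 - in_house_share) * external_cost)


# Hypothetical hourly costs, chosen only to show the shape of the curve.
IN_HOUSE_COST = 1.0
EXTERNAL_COST = 2.0

for share in (0.0, 0.25, 0.5, 0.75):
    cost = blended_cost_per_hour(share, IN_HOUSE_COST, EXTERNAL_COST)
    print(f"in-house share {share:.0%}: blended cost {cost:.2f} per hour")
```

Under these assumptions, every additional point of in-house share lowers the blended cost without requiring the external share to reach zero, which matches the idea of independence as a gradual shift rather than a switch.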

Semi-Annual Cadence and Validation Lab: The Advantage Is Industrial Speed

Meta is accelerating the design and deployment cycle: introducing a new generation every six months through 2027. In semiconductors, this is a statement of intent. The generative AI market is punishing companies that plan infrastructure as if models change every three years. Here, Meta attempts to align the cadence of silicon with the pace of the product.

The briefing provides a detail that is often overlooked: the lab validates chips as they arrive from manufacturing, testing performance, cost, and power consumption before they move to liquid-cooled servers. This sequence suggests that Meta is building an internal capability that goes beyond "designing an ASIC" and amounts to running a production line inside the organization: specification, verification, testing, rack integration, and large-scale deployment. Without this chain, proprietary chips are merely PowerPoint presentations.

However, the semi-annual cadence creates tension. Accelerating means making decisions with less time to learn from production. Meta has historically run into delays against its internal goals and responded with acquisitions to add talent. This points to the main risk: the challenge is not only designing a chip but making the process repeatable without compromising reliability or compatibility with the software stack.

From a distributive perspective, speed has a secondary effect: it reconfigures negotiations with suppliers. A buyer who can replace part of its demand with in-house capacity gains room to maneuver. It does not need to abandon Nvidia or AMD; it needs to come to the table with a credible alternative. In markets with dominant shares and bottlenecks, a credible alternative is what prevents paying an "urgency price."

Simultaneously, the entire industry is moving this way: Google with TPUs, AWS with Trainium and Inferentia, Microsoft with Azure Maia. Meta is not innovating the pattern; it is speeding it up on its own timeline.

The Bet on MTIA Reshuffles Winners and Losers in the Value Chain

When a company vertically integrates AI accelerators, the redistribution of value is not abstract. Four accounts change: computing price, energy cost, supply risk, and technological dependence.

For Meta, the most direct upside is capturing part of the margin that previously went to GPU suppliers for workloads where Meta has high repetition: ranking, recommendations, and progressively, generative AI inference. If the MTIA 300 already runs training for feeds, Meta is starting where it has volume and clear requirements. It then extends with MTIA 400 toward generative inference, and with MTIA 450/500 toward scaling generative inference by 2027. This progression is sensible: first addressing what pays the bill daily, then focusing on what defines the future of the product.

For suppliers like Nvidia, the effect is mixed. On one hand, Meta signs an agreement to purchase millions of chips and secures demand. On the other hand, Meta reduces the "captive" portion of its purchase. In the long run, this disciplines prices in the segments where the proprietary chip competes. Nvidia maintains its advantage where generality and software weigh more, but loses the ability to capture the entire surplus in specialized workloads.

For the data center ecosystem, there appears to be a hidden cost: operating proprietary hardware requires talent and processes that compete for internal resources. The calculation is not just CAPEX; it also involves organizational focus. Meta anticipates a high CAPEX in 2026, projecting over $40 billion, primarily oriented toward data centers. When CAPEX rises, specification errors become costly, and the discipline of validation becomes a strategic function.

For users and advertisers, the impact materializes indirectly. If Meta reduces its cost per inference, it can serve more complex models, or serve them more often, without a proportional increase in total spending. This sustains product performance. Efficiency is not just about savings; it also means the capacity to experiment more cheaply.

The most serious risk is that the narrative of independence pushes toward excessive integration. A proprietary design is an asset when it enhances a workload; it is a burden when it forces software to adapt to hardware instead of the other way around. The announcement does not provide public benchmarks or savings figures, so the final evaluation will depend on production data and on whether the semi-annual cadence can be sustained without sacrificing quality.

Infrastructure Independence Is Purchased with Discipline, Not Announcements

Meta is using MTIA for a specific goal: to secure capacity and lower costs in a market where demand for accelerators is not easing and investment in data centers is accelerating toward 2027. The briefing mentions estimated annual spending on AI data centers exceeding $200 billion by 2027 and also notes that in 2025, H100 prices surpassed $40,000 per unit. Even without extrapolating to Meta's own numbers, the incentive is clear: when the cost of an input becomes strategic and volatile, a company seeks two things: diversification and control.

What's interesting is that Meta is not trying to replace the major providers; it is attempting to reposition the relationship. It purchases millions of external chips to ensure scale and continuity, while simultaneously accelerating its internal capacity to avoid paying the "scarcity tax" on workloads where it can specialize. This combination is a design for financial resilience: reducing dependence without betting the operation on a single path.

The typical blind spot in these programs is confusing "proprietary chip" with "sustainable advantage." The advantage arises if the hardware improves the total cost of servicing models, if the timing aligns with product evolution, and if the organization executes integration smoothly. The validation lab and the active deployment of the MTIA 300 are signals of execution, not just intentions.

The distribution of value that emerges is clear: Meta captures more surplus by converting specialized computing into in-house capacity; suppliers lose part of their pricing power in those workloads, although they compensate with volume in general-purpose workloads; and the energy and data center bill becomes more manageable if efficiency is sustained. In decisions like these, the winners are those who keep every party preferring to stay in the relationship: Meta, if it delivers volume and predictability to its suppliers while reducing its dependence, and the suppliers, if they remain the bridge of elasticity that proprietary silicon cannot replace.