AI Agents for Businesses: Who Sets the Rules of the Game

On February 24, 2026, Anthropic announced its enterprise agent program: a set of pre-built modules for deploying its Claude model in finance, human resources, legal, and engineering tasks, connected to tools like Gmail, DocuSign, and data platforms. The announcement was precise and technically sound. The promise, articulated by its U.S. director Kate Jensen, is to correct what went wrong in 2025, framed as a failure of approach rather than of effort. This time, the claim goes, AI will genuinely operate within real corporate workflows.

With 100 million monthly downloads of its standardized connection protocol, and Claude Code scaling to become a billion-dollar revenue product in just six months, Anthropic is not speculating; it's executing. When a company of this magnitude makes substantial moves, business leaders have two choices: read the press release or delve into the underlying architecture.

I choose the latter.

The Promise is Modular, So is the Risk

Anthropic's model is smart from a product engineering standpoint. Instead of selling a monolithic platform that every company has to adapt from scratch, it offers functional modules—one agent for financial modeling, another for creating job descriptions, another for technical specifications—that IT teams can customize within controlled internal markets. Matt Piccolella, their product lead, summarized it well: each person should have their custom agent, managed centrally.

This modular architecture transforms costs that were once fixed—consultants, licenses for specialized software, custom developments—into variable deployments that scale according to usage. For small and medium-sized enterprises (SMEs) with limited IT resources, this represents a significant shift in financial logic. Companies can stop paying for installed capacity and start paying for operational results.

However, modularity has a dark mirror: each module inherits the assumptions of its designer. An HR agent generating job postings, onboarding materials, and offer letters is not neutral. It reflects an implicit idea of what a desirable candidate is, what language is professional, and what career structure is the norm. These assumptions are not visibly embedded in the code. They exist in the data on which the model was trained and, even before that, in the decisions of those who chose that data.

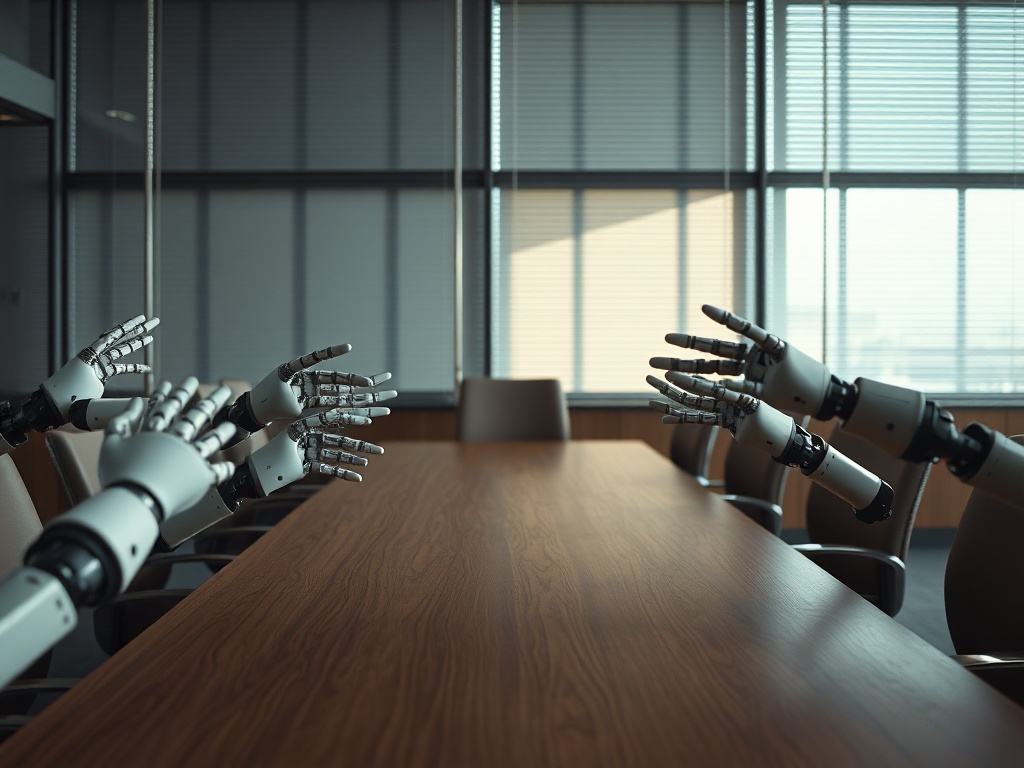

The question that no press release addresses is: who was in that room?

When the Blind Spot is Automated at Scale

One piece of data from Anthropic’s briefing deserves direct attention: analysis of millions of human-agent interactions shows that almost 50% of agent activity centers on software engineering. Finance, healthcare, and cybersecurity emerge as secondary sectors. This means that the usage patterns informing the system’s design—the behaviors that the model learned as "normal" and efficient—come from a very specific user profile.

Software engineering teams are, demographically, among the most homogeneous labor segments in the global economy. When this profile generates almost 50% of the training signal for a system set to be deployed in HR, finance, and legal (functions that manage hiring, compensation, credit, and compliance), the homogeneity of the source narrows the range of situations the system handles well.

This is not an accusation against Anthropic. It is a structural diagnosis that applies to the entire industry, and corporate leaders adopting these tools should incorporate it into their risk assessments. An AI agent that automates resume screening in an HR department can significantly shorten the process. It can also replicate, at industrial scale, biases that previously took weeks to manifest. Efficiency gains and bias risks do not cancel each other out: they compound.

Medium-sized companies, lacking algorithm auditing teams or technology ethics offices, are particularly vulnerable to this pattern. They adopt the tool for its legitimate speed benefits and assume that if it comes from a recognized provider, the bias issue has already been resolved. It hasn't been resolved; it has been outsourced.
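For a company without a dedicated auditing team, a first check on a resume-screening agent does not have to be elaborate. The sketch below is a minimal illustration, not a method taken from Anthropic's announcement: it assumes a hypothetical log of screening decisions as (group, advanced) pairs, computes each group's selection rate, and flags any group whose rate falls below 80% of the highest rate. The 0.8 threshold is the commonly cited "four-fifths" heuristic, used here as an assumption; the function names and the sample data are invented for the example.

```python
from collections import Counter


def selection_rates(decisions):
    """Share of candidates advanced per group.

    decisions: iterable of (group, advanced) pairs, where advanced is a bool.
    """
    totals, advanced = Counter(), Counter()
    for group, was_advanced in decisions:
        totals[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody was advanced, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / top for group, rate in rates.items()}


def flag_groups(decisions, threshold=0.8):
    """Groups whose impact ratio falls below the threshold (assumed: 0.8)."""
    ratios = impact_ratios(selection_rates(decisions))
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical screening log: 100 candidates in each of two groups.
sample = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 20 + [("B", False)] * 80
)

print(selection_rates(sample))  # {'A': 0.4, 'B': 0.2}
print(flag_groups(sample))      # ['B']
```

A flag from a check like this is a prompt for human review, not a verdict. Its value is that it moves the bias question back inside the company that is accountable for the hiring decision.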

The Network Supporting the Tool Matters as Much as the Tool

There’s another angle to this launch that technical analyses tend to overlook. Anthropic simultaneously announced the expansion of its experimental division led by the co-founder of Instagram and the addition of a new product lead from the field. This is a network of strategic links built on cross-reputations, shared histories, and trust accumulated within very specific circles of the Silicon Valley tech ecosystem.

This network has value. It also has very concrete geographical, cultural, and sectoral limits. A network of social capital built within a single perimeter does not detect signals from outside that perimeter. For companies operating in Latin American markets, southern Europe, or Southeast Asia, this implies that the product design priorities will rarely reflect their specific operational frictions: different legal frameworks, distinct labor structures, management cultures that do not align with the Anglo-Saxon assumptions underlying the pre-built modules.

The promise of customization via private internal markets is real, but it has a starting threshold. And that threshold was built from a particular place, by particular people, with specific experiences. Companies that understand this will be able to extract value from the system; those that don't will uncritically adopt a set of assumptions that are not their own.

The decision to integrate AI agents into HR, finance, or legal processes is not just a technical decision. It’s a governance decision. It involves choosing which criteria to automate, which flows to accelerate, and, by omission, which perspectives to exclude from the decision-making process. These choices have measurable operational consequences: in hiring quality, legal exposure, and ability to serve diverse markets. Organizations treating the adoption of AI agents as an IT decision rather than a board-level decision are miscalculating risk.

The Board That Didn't See Its Own Blind Spot

Anthropic's launch is not the problem. It's the mirror. It clearly shows that the next layer of corporate infrastructure (the agents managing hiring, evaluating credit, drafting contracts) is being designed by teams with very similar profiles, for clients that also tend to look alike, with data reflecting user behavior, almost 50% of which comes from a single sector.

Leaders adopting these tools without auditing their built-in assumptions will not be saving time. They will be delegating their most sensitive decisions to a system that was not calibrated for their specific reality. And when that system fails (because it will, at some point, in some process, for some candidate or client profile that didn't fit the mold), the responsibility will not lie with the provider. It will lie with those who signed off on the adoption without asking the pertinent questions.

The next time the board meets to approve the integration of AI agents, it's worth each member looking around. If they all arrived by the same paths, worked in the same sectors, and share the same frames of reference, they already know which blind spots that board is guaranteed to have. And those blind spots are precisely what the system they are about to adopt will amplify.