The Inflated Metric That Could Cost Billions: The Performance Mirage in 2D Transistors

For nearly 20 years, 2D semiconductors have been a convenient promise: materials such as molybdenum disulfide (MoS₂) could enable ultra-thin channels, efficient switching, and a path to shrinking transistors further as silicon reaches its practical limits. This narrative has relied on lab results that, at first glance, seemed to show that a leap was imminent.

The problem is that much of that comparative evidence may rest on a testing architecture that cannot be carried over into commercial chips. A Duke University study published in ACS Nano on February 17, 2026, highlights an uncomfortable truth: the commonly used “back-gated” configuration, favored for its experimental simplicity, can inflate measured performance by up to six times through an effect known as “contact gating.” Contact gating lowers contact resistance, but the design that produces it carries physical limits, including current leakage and speed restrictions, that conflict with industrial reality.

As an Analyst of Diversity, Equity, and Social Capital, I read this not as a moral issue but as a strategic one. When an entire field grows accustomed to measuring "progress" with instruments that reward an illusion, risk does not spread out; it concentrates. The burden falls on the R&D portfolio, the technology roadmap, and the reputation of the leadership that chose metrics that were easy to celebrate.

The Duke Finding: How Experiment Design Changes the Reported Physics

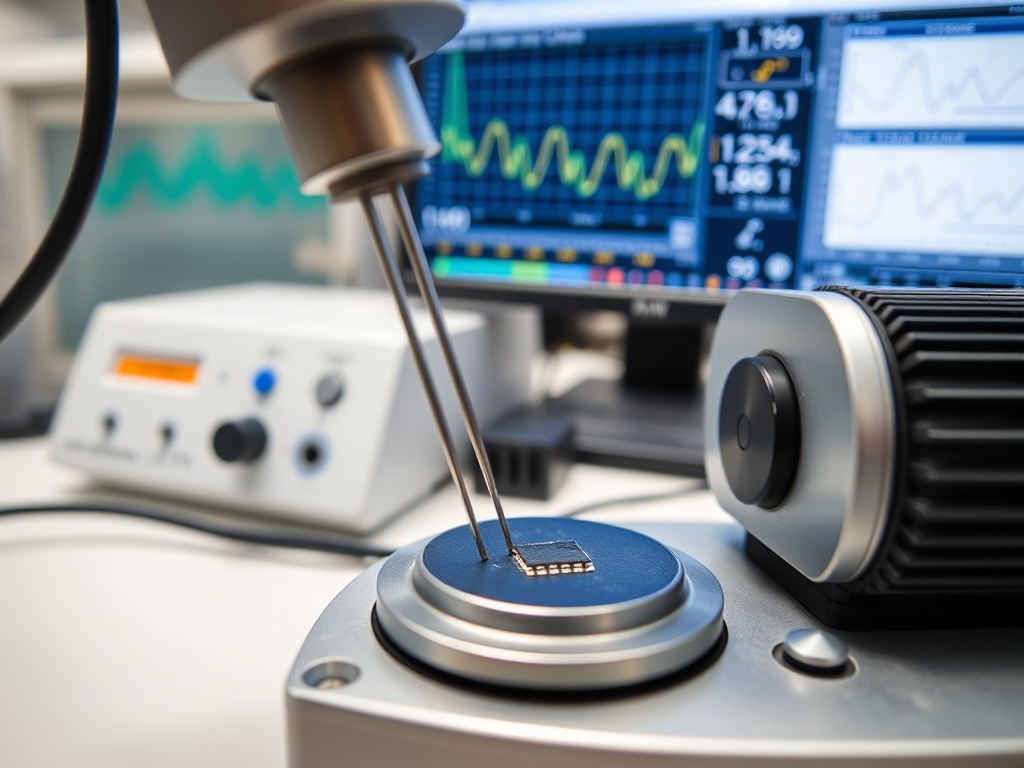

The team led by Aaron Franklin, with key experimental work from Ph.D. student Victoria Ravel, examined how 2D transistors behave when the gate that modulates the channel is separated from the one that influences the contact regions. The “back-gated” architecture places the channel on a silicon substrate that doubles as the gate, and that gate modulates not only the channel but also the metal contacts that inject current. This “dual effect” is the heart of contact gating.

In business terms, the difference is staggering: the experiment is not measuring the material alone; it is also measuring an architectural shortcut. The researchers fabricated a symmetric dual-gate device in which either a top gate or a back gate can be driven independently on the same MoS₂ channel, isolating the effect of the contacts. Even in larger devices, performance doubled under certain conditions, indicating that architecture matters even before scaling.

But the finding that should drive decisions appears at dimensions relevant to future chips: with a 50 nm channel length and a 30 nm contact length, contact gating increased the on-state current by nearly 70% and inflated reported performance by up to six times. Franklin put it bluntly: “Most reports of high-performance 2D transistors use a device design that isn't compatible with commercial technologies… and can significantly inflate performance.”
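A textbook way to see why contacts weigh more as devices shrink is the simple series-resistance picture: on-state current is set by the channel resistance, which scales with channel length, plus two contact resistances (source and drain), which do not. The sketch below is a toy model under that assumption, not the Duke team's analysis. The function names and every resistance value are hypothetical, and contact gating is represented only as a lower contact resistance when the back gate is driven.

```python
# Toy series-resistance model of contact gating.
# All parameter values are hypothetical and in arbitrary (ohm-like) units;
# this is an illustration, not the Duke study's model or data.


def on_current(v_ds: float, r_channel_per_nm: float, channel_nm: float,
               r_contact: float) -> float:
    """On-state current through a channel in series with source and drain contacts."""
    r_total = r_channel_per_nm * channel_nm + 2.0 * r_contact
    return v_ds / r_total


def contact_gating_gain(channel_nm: float, r_contact_top: float,
                        r_contact_back: float, v_ds: float = 1.0,
                        r_channel_per_nm: float = 10.0) -> float:
    """Ratio of back-gated to top-gated on-current for the same channel.

    Contact gating is modeled only as a lower contact resistance when the
    back gate is driven (r_contact_back < r_contact_top).
    """
    i_top = on_current(v_ds, r_channel_per_nm, channel_nm, r_contact_top)
    i_back = on_current(v_ds, r_channel_per_nm, channel_nm, r_contact_back)
    return i_back / i_top


if __name__ == "__main__":
    # Same hypothetical contact improvement (2000 -> 1000) at two channel lengths.
    for length in (1000.0, 50.0):
        gain = contact_gating_gain(length, r_contact_top=2000.0, r_contact_back=1000.0)
        print(f"channel {length:>6.0f} nm: back/top on-current ratio = {gain:.2f}")
```

With these made-up numbers, the same contact improvement raises on-current by about 17% on a 1000 nm channel and by 80% on a 50 nm channel. Only the trend is meant to carry over; the magnitudes do not map onto the Duke measurements.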

This is not an academic footnote. It is a reminder that the industry may be comparing apples to laboratory artifacts. And when budgets, procurement, partnerships, and talent are decided on the basis of distorted benchmarks, castles are built on sand.

The Economy of Technical Hype: When a Biased Benchmark Reshuffles Capital and Priorities

Hype is not born solely from marketing. In deep tech, hype emerges from structural incentives: publishing, demonstrating "state of the art," obtaining funding, and maintaining a narrative of continuity. If the quickest way to achieve "good" results is a simple testing architecture, and that simplicity becomes the standard, the entire discipline starts to optimize for the test rather than for the product.

Duke puts numbers on that distortion: up to 6x inflation is not a margin of error; it is a multiplier that alters portfolio decisions. Even without specific financial figures, the implication is clear: the sector invests billions in semiconductor R&D and scaling paths, where a single percentage point of performance or energy efficiency can shift entire investment cycles. If part of the community has been celebrating improvements that depend on a design that cannot be integrated because of leakage and speed issues, corporate risk takes three forms.

First, capital allocation risk: funding materials or approaches that look superior in back-gated tests but lose their advantage in compatible architectures. Second, schedule risk: roadmaps that assume near-term technical maturity can slip when benchmarks are corrected. Third, reputational and governance risk: when a technology committee cannot explain why a performance leap disappears once the test setup changes, the board's trust in R&D erodes.

Franklin also points to a typical tension: “Amplifying performance sounds like a good thing… but… it has physical limitations that prevent it from being used in actual device technology.” Translated into C-Level language: the lab might be maximizing a KPI that the market won’t pay for. That is the most expensive kind of progress.

The Organizational Blind Spot: Technical Homogeneity and Closed Networks Normalizing the Error

This is where my lens comes in: the distortion is not only a matter of electrical architecture; it is also a matter of social architecture. For two decades, a benchmarking practice became the norm. That rarely happens because “nobody knew.” It happens because the networks that validate knowledge (reviewers, reference labs, opinion leaders) tend to be closed and self-referential. When the network is too vertical, the power to define what counts as "good performance" becomes concentrated.

The study describes a phenomenon that “affects most lab tests” and calls for reassessing hundreds of previous studies. A field-wide correction of this kind takes more than one paper: it requires a capacity for technical dissent within the communities that set standards. In corporate organizations, that translates into teams whose members are not clones of one another in education, incentives, and contacts.

A homogeneous management team often fails in one specific way: it confuses consensus with truth. If everyone at the technical table shares the same academic background, the same conferences, the same validation circuit, and the same “gurus,” the system becomes vulnerable to methodological bias. No malice is required. A reputation circuit that rewards “comparable” results and punishes departures from the dominant setup is enough.

The operational lesson is uncomfortable: useful diversity in deep tech is not cosmetic. It is diversity of discipline (manufacturing, design, integration, reliability), diversity of incentives (research vs. product), and diversity of networks (people who do not depend on the same social capital to advance). In execution terms, the back-gated setup, and the contact gating it produces, acted as a “shortcut” for years because it was easy to use and yielded attractive numbers. Closed networks turn such shortcuts into dogma.

When Franklin states “we need to be honest about how device architecture shapes what we measure,” he is indirectly highlighting a knowledge governance failure: if the measurement standard rewards an illusion, the entire ecosystem runs in the wrong direction.

What a C-Level Should Demand Starting Tomorrow: Integrable Benchmarks and a Technical Network That Can Say No

The value of the study is not that it discourages 2D research; it is that it forces a change in discipline: separating material discovery from testing architecture and from compatibility with commercial integration. Duke proposes a foundation: designs such as the symmetric dual-gate device, for fairer and more reproducible evaluations. The team also plans to scale contact lengths down to 15 nm and to test alternative metals that reduce contact resistance within integration-compatible constraints.

For C-Level leaders, this becomes a control checklist, not an academic discussion:

- R&D KPI Reevaluation: demand that any “record” of 2D performance be accompanied by its test setup and an explicit explanation of whether the architecture is integrable or merely demonstrative. A figure without context is no longer evidence.

- Validation Governance: require cross-reviews by people whose profiles are not captured by the same publication circuit. This includes manufacturing engineers, reliability experts, and individuals who have lived through technological transitions in which benchmarks collapsed during industrialization.

- Social Capital Architecture: build horizontal relationships with labs and teams that can counter the dominant narrative without bearing the reputational cost of “departing from the standard.” In hard innovation, the most valuable network is not the one that applauds first; it is the one that detects failure early.
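The first checklist item can be made concrete as a reporting rule. The sketch below is a hypothetical schema, not an industry or Duke standard; the class, field, and enum names are invented for illustration. Each performance record must state its gate configuration, dimensions, and an explicit integrability note, and a headline ratio between two records is refused unless they share the same gate configuration and dimensions.

```python
# Hypothetical benchmark-reporting schema; names and fields are illustrative only.
from dataclasses import dataclass
from enum import Enum


class GateConfig(Enum):
    BACK_GATED = "back-gated"  # substrate gate also modulates the contacts
    TOP_GATED = "top-gated"    # gate over the channel only


@dataclass(frozen=True)
class BenchmarkRecord:
    device_id: str
    gate_config: GateConfig
    channel_nm: float
    contact_nm: float
    on_current: float          # reported on-state current, units as published
    integrable: bool           # explicit claim: compatible with commercial integration?
    integrability_note: str    # why the setup is integrable or merely demonstrative

    def __post_init__(self) -> None:
        # A figure without context is rejected at construction time.
        if not self.integrability_note.strip():
            raise ValueError(f"{self.device_id}: integrability_note must not be empty")


def comparable(a: BenchmarkRecord, b: BenchmarkRecord) -> bool:
    """Comparable only if measured in the same gate configuration and dimensions."""
    return (a.gate_config == b.gate_config
            and a.channel_nm == b.channel_nm
            and a.contact_nm == b.contact_nm)


def headline_ratio(a: BenchmarkRecord, b: BenchmarkRecord) -> float:
    """Ratio of a's on-current to b's; refused for non-comparable records."""
    if not comparable(a, b):
        raise ValueError(
            f"cannot compare {a.gate_config.value} ({a.device_id}) "
            f"with {b.gate_config.value} ({b.device_id})"
        )
    return a.on_current / b.on_current
```

In this sketch a back-gated record can still be stored and reported; it simply cannot serve as either side of a “record” claim against a top-gated one.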

The semiconductor industry cannot afford two more decades of optimization for the laboratory showcase. The message from Duke is a call to maturity: measure as you manufacture, and manufacture as you sell.

The mandate for corporate leadership is straightforward: at the next board meeting, C-Level leaders must look around the table and recognize that if everyone there is too alike, they share the same blind spots and are positioning themselves as the next victims of disruption.